Software for News Content Monitoring and Analysis

Using Python/Django, Redis and Beautiful Soup to build an application that gathers and analyzes news data to assess bias and reliability

Ottawa Dialogue is a university-based organization that brings together research and action in the field of dialogue and mediation. Guided by the needs of the parties in conflict, Ottawa Dialogue develops and carries out quiet, long-term, dialogue-driven initiatives around the world. As a complement to its field work, Ottawa Dialogue pursues a rich research agenda focused on conflict analysis, third-party dialogue-based interventions, and best practices relating to “Track Two Diplomacy”.

To bring innovation to the field of monitoring and evaluation and to strengthen its organizational capacity for conflict analysis, Ottawa Dialogue sought to develop a tool that streamlines data collection, filtering and analysis. The related research projects involve analyzing a vast number of articles from across the web and determining their bias and reliability, along with their position in Google News.

The approach enables researchers to gain a better understanding of the broader issues surrounding the state of media on the web in relation to the ever-changing situation on the ground in conflict zones over long periods of time. Given the amount of data needed to conduct this type of research, a specialized data collection and processing software solution had to be designed and developed.

With extensive experience in web technologies and data analysis, SoftKraft was engaged to develop a customized system that automates many of the tasks involved in processing news web content and the large volumes of data that come with it.

Students, researchers, and educators often rely on news media for their work. Given the massive and fluctuating supply of news and news-like input, it is often difficult to discern which sources are more dependable than others. The tool was built to guide research work by helping users assess the reliability and bias of the content analysed and identify sources that warrant confidence and stand up to critical review, which in turn puts users in a position to produce more trustworthy research output.

Working collaboratively with the Client’s Product Owner, we examined in depth how the existing process was structured, evaluated possible solutions that could improve it in a non-disruptive way, and decided on a specific process design for the software to support.

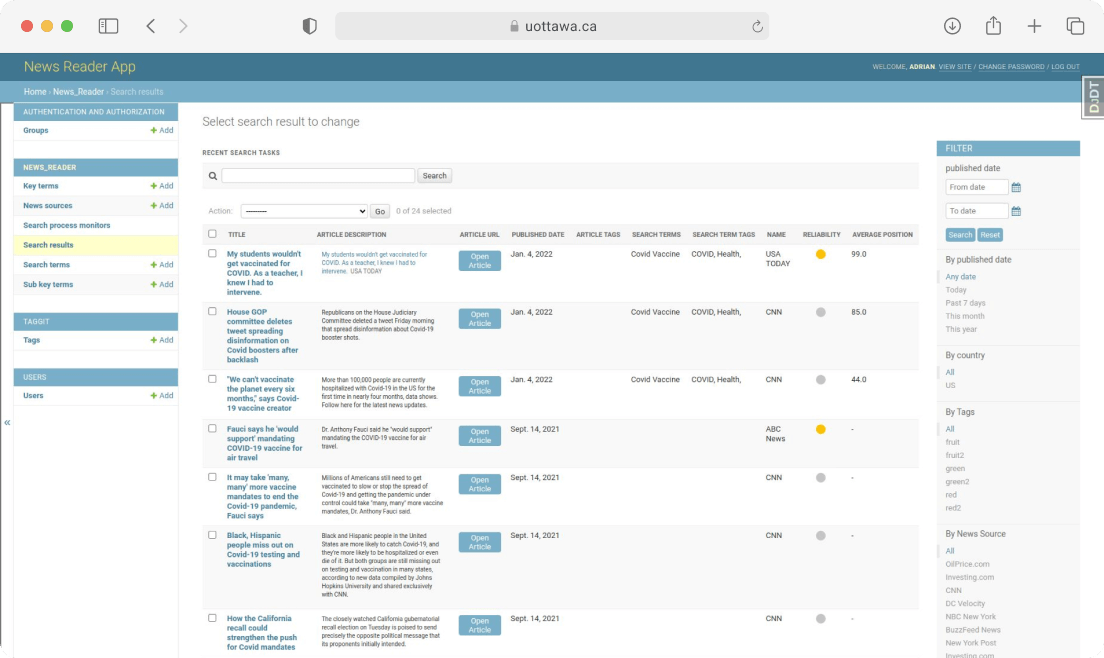

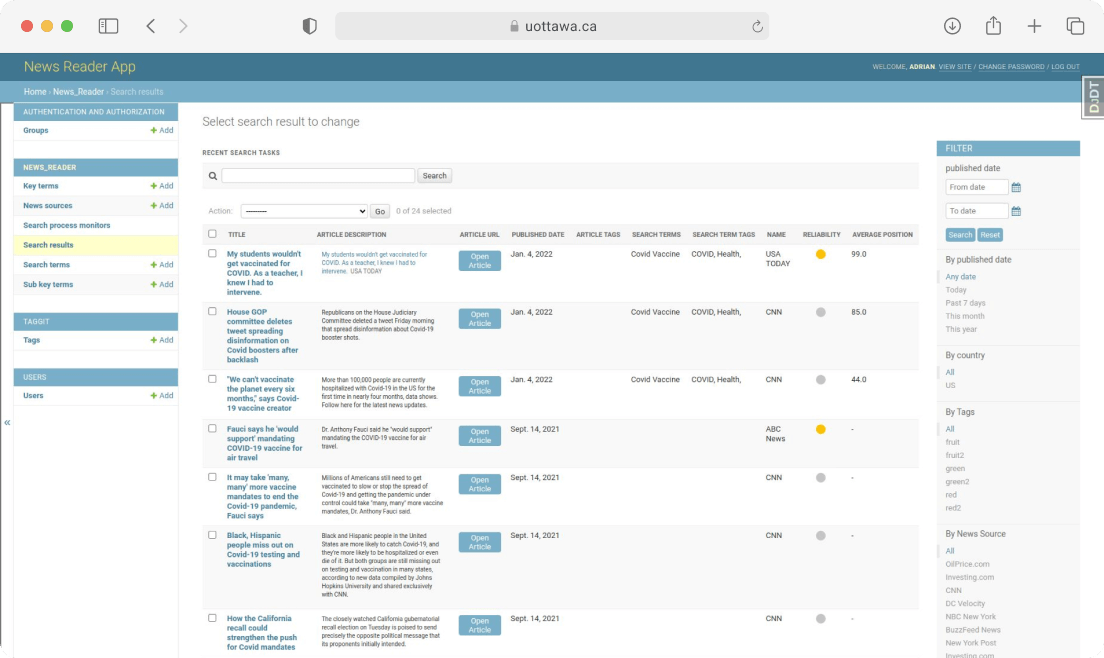

The solution includes tools for creating and managing search term lists with multiple customizable parameters, a tool for creating and managing news source websites and determining their reliability and bias, and a user interface for filtering and managing the information gathered.
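To make these building blocks more concrete, here is a minimal Python sketch of how search terms, rated news sources and result filtering could fit together. It is an illustration only, not the project's actual code: the class names, fields, rating scales and the `filter_articles` helper are all assumptions introduced for this example, and it uses plain dataclasses rather than Django models to stay self-contained.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class NewsSource:
    """A news website with analyst-assigned ratings (hypothetical scales)."""
    domain: str
    reliability: float  # assumed scale: 0.0 (unreliable) to 1.0 (reliable)
    bias: float         # assumed scale: -1.0 to 1.0, with 0.0 as neutral


@dataclass(frozen=True)
class SearchTerm:
    """One entry in a search terms list, with illustrative parameters."""
    phrase: str
    language: str = "en"
    max_results: int = 20


@dataclass(frozen=True)
class Article:
    """A collected article and where it ranked for a given search term."""
    title: str
    source: NewsSource
    term: SearchTerm
    position: int  # 1-based rank in the search results for `term`


def filter_articles(
    articles: Iterable[Article],
    min_reliability: float = 0.0,
    max_abs_bias: float = 1.0,
    max_position: Optional[int] = None,
) -> List[Article]:
    """Keep articles whose source meets the thresholds, ordered by rank."""
    selected = [
        a for a in articles
        if a.source.reliability >= min_reliability
        and abs(a.source.bias) <= max_abs_bias
        and (max_position is None or a.position <= max_position)
    ]
    return sorted(selected, key=lambda a: a.position)
```

A researcher could then call, for instance, `filter_articles(articles, min_reliability=0.5, max_abs_bias=0.5, max_position=3)` to see only the top-ranked results from sources rated as reasonably reliable and balanced. In a Django application the same fields would more likely live on model classes and the filtering would be expressed as database queries.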

All in all, the system enables the Client to focus more on the bigger picture and significantly improves the overall efficiency of news data collection, processing and analysis.