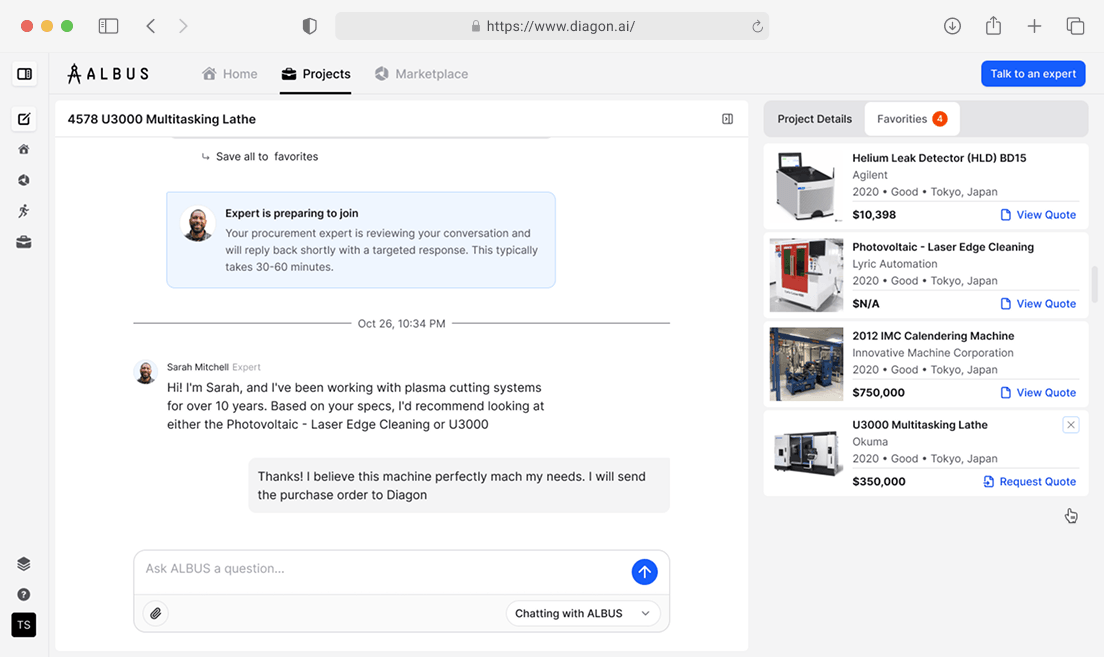

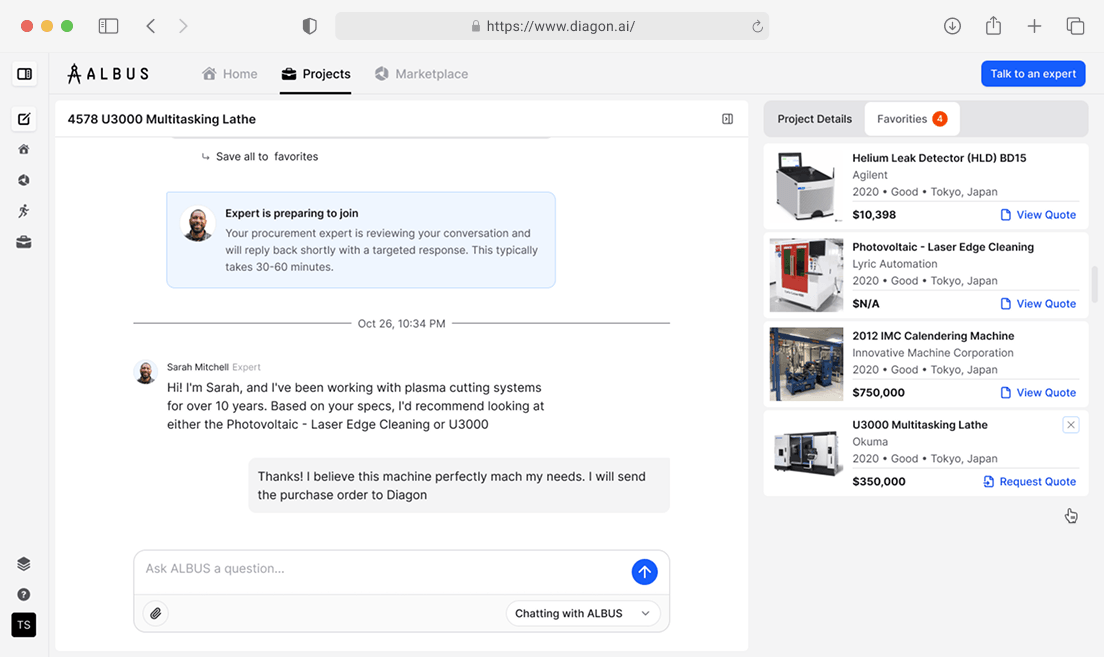

AI-Powered Supply Chain Marketplace

Building a scalable, autonomous data engine to index 32,000+ suppliers for a Series Seed startup

The client, Diagon, founded by Tesla and Rivian veterans, set out to revolutionize the industrial machinery market by letting manufacturers source equipment "in minutes, not months".

Although Diagon had validated its concept with a prototype, it faced a critical execution gap in scaling. The goal was to index and structure product data from more than 32,000 disparate supplier websites, a task that demanded massive scale and "human-like" reasoning to interpret unstructured technical specifications.

The existing infrastructure hit resource limits under heavy AI extraction workloads. To meet investor expectations, Diagon needed to move from a lightweight MVP to a robust, enterprise-grade cloud architecture, one capable of autonomous decision-making so that operational costs remained viable.

We structured the engagement as a progressive partnership, adopting a "delivery-first" model to build trust before scaling the team.

Collaboration Model

We implemented a Dual-Stream Agile Workflow:

The partnership evolved Diagon’s platform into a robust, investor-ready data engine capable of handling massive data volumes with high precision.