The finance industry is rapidly embracing AI, with 91% of financial services companies either evaluating or actively deploying AI technologies. However, the sector faces unique challenges, primarily due to stringent data privacy laws and regulatory compliance requirements.

In this article, we explore the top 10 risks of AI in finance and offer a detailed guide for firms that want to leverage generative AI responsibly. We also provide strategic insights for navigating the complex landscape of AI regulatory compliance, so that your organization can harness AI's transformative power while staying aligned with critical legal and ethical standards.

Diverging regulatory approaches for AI

Regulatory structures are becoming essential for overseeing the growth and application of AI. Different regions are implementing different rules for technology governance, reflecting their distinct policy objectives, technical capabilities, and societal values. For international businesses, maneuvering through these differing rules creates a complex risk management environment.

United States (US)

The US approach to AI regulation has been relatively decentralized and sector-specific, with an emphasis on innovation and competitiveness:

- Federal guidance and sector-specific policies: The US has issued guidance and principles for AI development and use, with agencies like the FTC and FAA developing sector-specific regulations.

- Encouraging innovation: A focus on fostering innovation and maintaining technological leadership, with less emphasis on prescriptive regulation.

- Public-private partnerships: Collaboration between government, industry, and academia to guide the ethical development and deployment of AI technologies.

United Kingdom (UK)

The UK favors a principles-based, flexible approach to AI regulation, leveraging existing regulatory bodies and frameworks:

- Context-specific regulation: AI is regulated based on the outcomes it generates, integrating AI oversight into the existing regulatory regime.

- Five cross-cutting principles: Safety, security and robustness; appropriate transparency and explainability; fairness; accountability and governance; and contestability and redress guide how AI is integrated and overseen.

- No dedicated AI regulator: Existing regulators like the FCA and PRA will incorporate AI into their remits, avoiding the creation of a new, specific AI regulatory body.

European Union (EU)

The EU is pioneering a prescriptive and risk-based regulatory framework for AI, emphasizing consumer protection and ethical standards:

- AI Act: Proposes a comprehensive legal framework categorizing AI systems by risk levels, from unacceptable to minimal/no risk.

- Risk categories: Unacceptable-risk AI systems are prohibited outright, high-risk systems must meet stringent requirements, limited-risk systems carry transparency obligations, and minimal/no-risk systems face few or no additional obligations.

- European AI Board: A new entity to ensure uniform application of the AI Act across member states, overseeing compliance and guidance.

Impact on firms in the financial sector

For firms in the finance sector, the rapid adoption of AI, combined with diverging regulatory approaches to it, presents a complex landscape to navigate. These differing regulatory strategies have significant implications:

- Finance companies must adapt their operations to comply with the varied regulations of each jurisdiction in which they operate. This includes understanding and implementing different requirements for AI applications based on risk assessments, consumer protection, and ethical standards.

- Active engagement with regulators is crucial. Participation in consultations and discussions can help shape the regulatory landscape and ensure firms are well-prepared for upcoming changes.

- Financial firms need to enhance their company-specific risk management frameworks to include AI-related risks. This encompasses not only technological and operational risks but also the regulatory compliance risks that arise from using AI.

Top 10 risks of generative AI in finance

In this section we’ll take a closer look at the top 10 key risks of generative AI models in finance along with some strategies to mitigate such risks effectively.

Data privacy

In the financial services sector, the use of generative AI and natural language processing raises significant data privacy concerns, because these systems are often trained on, or prompted with, sensitive customer information. Financial institutions must walk a tightrope of regulatory requirements, such as GDPR and CCPA, to maintain compliance while pursuing innovation.

Strategies to mitigate:

- Implement strong data governance policies, ensuring data quality and source transparency.

- Provide clear options for individuals to control their personal data, including opt-out mechanisms.

- Enhance cybersecurity measures to protect against unauthorized access to personal data or intellectual property.
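To make the opt-out and data governance strategies more concrete, here is a minimal Python sketch of one way to honor opt-outs and pseudonymize direct identifiers before customer records reach a generative AI workflow. The field names (`customer_id`, `name`, `email`, `phone`, `ai_opt_out`) and the keyed-hash approach are illustrative assumptions, not a prescribed method. In practice the key would live in a secrets manager, and pseudonymized data can still count as personal data under GDPR.

```python
import hashlib
import hmac

# Hypothetical field names; adapt them to your own data model.
DIRECT_IDENTIFIERS = ("name", "email", "phone")


def pseudonymize(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed hash (HMAC-SHA256), so records stay
    linkable across datasets without exposing the raw value."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]


def prepare_for_ai(records: list[dict], key: bytes) -> list[dict]:
    """Drop customers who opted out, then strip and pseudonymize identifiers."""
    prepared = []
    for record in records:
        if record.get("ai_opt_out", False):
            continue  # respect the customer's opt-out choice
        cleaned = {
            k: v
            for k, v in record.items()
            if k not in DIRECT_IDENTIFIERS and k != "ai_opt_out"
        }
        # Overwrite the raw ID with its pseudonym.
        cleaned["customer_id"] = pseudonymize(str(record["customer_id"]), key)
        prepared.append(cleaned)
    return prepared
```

Using a keyed hash rather than a plain hash means someone without the key cannot rebuild the mapping by hashing guessed IDs.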

Embedded bias

AI systems can replicate biases present in their training data, leading to unfair outcomes in financial areas such as credit, insurance, and investments. The "black box" nature of these complex algorithms makes it difficult for institutions to understand why a decision was made, which in turn makes embedded biases harder to identify and correct.

Strategies to mitigate:

- Regularly conduct audits on AI systems to evaluate fairness and bias across different demographic groups.

- Ensure that the data used to train AI systems is as diverse and representative as possible to minimize bias.
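A fairness audit can start with something as simple as comparing outcome rates across groups. The sketch below computes each group's approval rate relative to the best-treated group and flags ratios under 0.8, borrowing the "four-fifths" heuristic from US employment-selection guidance. The threshold and the `(group, approved)` input format are assumptions; a real audit would combine several fairness metrics with legal review.

```python
from collections import defaultdict


def approval_rates(decisions):
    """decisions: iterable of (group, approved: bool). Returns approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact(decisions, threshold=0.8):
    """Compare each group's approval rate with the highest-rate group.
    Ratios below `threshold` (the "four-fifths" heuristic) are flagged for review."""
    rates = approval_rates(decisions)
    best = max(rates.values())
    report = {}
    for group, rate in rates.items():
        ratio = rate / best if best > 0 else 1.0
        report[group] = {"rate": rate, "ratio": ratio, "flagged": ratio < threshold}
    return report
```

A flagged group is a prompt for investigation, not proof of bias: the gap may be explained by legitimate risk factors, which the audit should then document.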

Robustness

AI tools or systems that lack robustness are prone to "hallucinations": generating incorrect or misleading information. These hallucinations can lead to decisions based on inaccurate data, endangering investments and, at worst, the overall financial stability of an organization.

This lack of reliability can undermine trust in automated financial analysis and decision-making processes. Additionally, generative AI tools that are not robust enough may fail to adapt to new types of financial fraud or novel market conditions, leaving financial institutions vulnerable to unanticipated threats. The consequences of these risks can range from financial losses to compromised customer trust and regulatory non-compliance, underscoring the critical need for robustness in financial AI applications.

Strategies to mitigate:

- Implement thorough verification protocols for AI-generated data.

- Communicate the limitations and capabilities of AI systems transparently to users.

- Invest in R&D for developing more resilient large language models.
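One lightweight verification protocol is to check that every figure an AI model quotes can be traced back to source data. This Python sketch extracts numbers from generated text and reports any that do not match a known source value within a relative tolerance. The regex, the 0.5% tolerance, and the function names are hypothetical choices, and the check is deliberately naive: it will also flag incidental numbers such as years, which a human reviewer would then clear.

```python
import re

# Numbers like 12, 1,250.5 or -3.2; the lookbehind skips digits glued to words (e.g. "Q3").
NUMBER = re.compile(r"(?<![\w.])-?\d+(?:,\d{3})*(?:\.\d+)?")


def extract_numbers(text: str) -> list[float]:
    """Pull numeric values out of free text, dropping thousands separators."""
    return [float(m.replace(",", "")) for m in NUMBER.findall(text)]


def unsupported_figures(generated: str, source_values, rel_tol: float = 0.005) -> list[float]:
    """Return figures in AI-generated text that match no source value within rel_tol."""
    unsupported = []
    for value in extract_numbers(generated):
        if not any(abs(value - s) <= rel_tol * max(abs(s), 1.0) for s in source_values):
            unsupported.append(value)
    return unsupported
```

For example, if a generated summary says a margin "rose to 12.4 percent" but the source data shows 12.1, the function returns `[12.4]` so the summary can be held for review before it is used.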

Synthetic data

Synthetic data offers financial institutions a significant advantage: it lets them innovate and develop AI models without compromising privacy, particularly where real data is scarce or sensitive. However, the core issue remains that synthetic data might not fully capture the nuances and complexity of genuine financial datasets, such as rare market events or subtle relationships between variables.

Strategies to mitigate:

- Establish benchmarks for the accuracy and realism of synthetic data.

- Enhance validation protocols to ensure fidelity to real-world financial conditions.

- Clearly disclose the use and methodology behind synthetic data generation.
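One way to set a benchmark for realism is to compare each synthetic column's distribution with its real counterpart. The sketch below computes the two-sample Kolmogorov-Smirnov statistic (the largest gap between the two empirical CDFs) in plain Python and flags columns above an illustrative `max_gap` of 0.1. That threshold is an assumption, and per-column checks like this will not catch broken correlations between columns. SciPy's `scipy.stats.ks_2samp` computes the same statistic along with a p-value.

```python
from bisect import bisect_right


def ks_statistic(real, synthetic) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: the largest gap between the
    empirical CDFs of the two samples (0 = identical, 1 = fully separated)."""
    real, synthetic = sorted(real), sorted(synthetic)
    n, m = len(real), len(synthetic)
    gap = 0.0
    # The empirical CDFs only change at observed points, so checking those suffices.
    for x in real + synthetic:
        cdf_real = bisect_right(real, x) / n
        cdf_synth = bisect_right(synthetic, x) / m
        gap = max(gap, abs(cdf_real - cdf_synth))
    return gap


def check_fidelity(real_columns, synthetic_columns, max_gap=0.1):
    """Return {column: passes} for each column. max_gap is an illustrative
    benchmark, not a regulatory standard."""
    return {
        name: ks_statistic(real_columns[name], synthetic_columns[name]) <= max_gap
        for name in real_columns
    }
```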

Explainability

In finance, explainability is critical: decisions related to product development, risk management, compliance, and customer relations must be transparent enough to withstand regulatory and stakeholder scrutiny. A robust financial framework must clearly articulate the reasoning behind each decision. Without such explainability, AI risks becoming a "black box," making it challenging to ensure compliance, check for bias, and maintain trust among customers and the public.

Strategies to mitigate:

- Invest in and deploy AI models and tools that prioritize explainability, allowing for clear understanding of how decisions are made.

- Engage in ongoing dialogue with regulatory bodies to ensure AI systems are developed and used in compliance with existing laws and guidelines.
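For simple models, explainability can be exact. Assuming a linear credit-scoring model (the weights, baseline applicant, and feature names below are hypothetical), each feature's contribution to the score gap is `weight * (value - baseline)`, so the features that pushed an applicant's score down can be reported as reason codes. Complex models need approximation techniques such as SHAP instead, and those explanations are estimates rather than exact decompositions.

```python
# Hypothetical linear model: weights and baseline values are illustrative only.
WEIGHTS = {"debt_to_income": -4.0, "late_payments": -0.8, "years_of_history": 0.3}
BASELINE = {"debt_to_income": 0.30, "late_payments": 1.0, "years_of_history": 8.0}


def score(applicant: dict) -> float:
    """Linear score: higher is better."""
    return sum(WEIGHTS[f] * applicant[f] for f in WEIGHTS)


def reason_codes(applicant: dict, top_n: int = 2) -> list[tuple[str, float]]:
    """Rank features by how much they pulled this applicant's score below the
    baseline applicant's. For a linear model the contributions sum exactly to
    score(applicant) - score(BASELINE), so the explanation is faithful."""
    contributions = {f: WEIGHTS[f] * (applicant[f] - BASELINE[f]) for f in WEIGHTS}
    negatives = sorted(
        (item for item in contributions.items() if item[1] < 0),
        key=lambda kv: kv[1],  # most negative first
    )
    return negatives[:top_n]
```

For an applicant with four late payments and a 55% debt-to-income ratio, this ranks `late_payments` ahead of `debt_to_income` as the main reasons for a lower score.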

Cybersecurity

Cybersecurity is pivotal for financial firms using AI, because both the AI infrastructure and the integrity of the data it relies on must be protected against cyber threats. Integrating AI with other technologies expands the attack surface, increasing the risk of data breaches and financial losses. Ensuring data accuracy and safeguarding sensitive information are key to maintaining a secure financial environment.

Strategies to mitigate:

- Implement robust encryption and access control systems to protect data and limit unauthorized access.

- Regularly update and patch systems to address vulnerabilities and prevent potential cyberattacks.

- Conduct continuous security assessments and audits to identify and rectify weaknesses in the AI infrastructure.
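Access control for AI systems is often implemented as deny-by-default, role-based permissions, with every access attempt logged so it can be reviewed in later audits. The roles, actions, and in-memory log below are hypothetical placeholders for a minimal sketch; a production system would rely on the firm's identity provider and tamper-resistant log storage.

```python
# Hypothetical roles and permissions for an internal AI platform.
ROLE_PERMISSIONS = {
    "analyst": {"query_model"},
    "ml_engineer": {"query_model", "deploy_model"},
    "auditor": {"read_audit_log"},
}

# In-memory audit trail of (user, role, action, allowed); a stand-in for real log storage.
audit_log: list[tuple[str, str, str, bool]] = []


def authorize(user: str, role: str, action: str) -> bool:
    """Deny by default: allow only actions explicitly granted to the role,
    and record every attempt, allowed or not."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_log.append((user, role, action, allowed))
    return allowed
```

Unknown roles fall through to an empty permission set, so a misconfigured account is refused rather than silently granted access.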

Sustainability

The energy-intensive nature of extensive AI operations, especially in deep learning and other complex models used in finance, has highlighted the environmental implications of such technology. This growing concern underscores the need to balance AI's computational benefits with its ecological footprint, prompting a search for more sustainable practices in the AI industry.

Strategies to mitigate:

- Select hardware and providers that prioritize sustainability.

- Invest in sustainable AI research to develop low-power models and improve algorithm efficiency.

Market manipulation

Competitive pressures can drive firms to use AI in ways that manipulate financial markets, creating the risk of artificial asset price movements. AI-driven manipulation makes it harder to combat fraud and ensure market integrity, as it can produce deceptive trades that undermine investor trust and market stability. Because such manipulation is complex to detect, firms must remain vigilant, use advanced detection tools, and adhere to strong regulatory frameworks to ensure market transparency and fairness.

Strategies to mitigate:

- Train AI systems to robustly detect and resist manipulative patterns.

- Implement continuous monitoring and validation to ensure AI integrity.

- Conduct regular audits and establish safety thresholds to prevent harmful AI activities.
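Safety thresholds can be enforced as pre-trade checks that every AI-generated order must pass before it reaches the market. In this sketch, the limit values, the `Order` fields, and the use of a high cancel-to-order ratio as a possible spoofing signal are all illustrative assumptions; actual limits belong in the firm's risk policy and surveillance systems.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    # Illustrative thresholds; real values come from the firm's risk policy.
    max_quantity: int = 10_000
    max_price_deviation: float = 0.05  # 5% away from the reference price
    max_cancel_ratio: float = 0.9      # cancels / orders in the monitoring window


@dataclass
class Order:
    symbol: str
    quantity: int
    price: float


def check_order(order: Order, reference_price: float, orders_sent: int,
                cancels: int, limits: Limits = Limits()) -> list[str]:
    """Return the reasons to block an AI-generated order; an empty list means
    the order may proceed. reference_price is assumed to be positive."""
    reasons = []
    if order.quantity > limits.max_quantity:
        reasons.append("quantity above limit")
    if abs(order.price - reference_price) / reference_price > limits.max_price_deviation:
        reasons.append("price too far from reference")
    if orders_sent and cancels / orders_sent > limits.max_cancel_ratio:
        reasons.append("cancel ratio suggests possible spoofing")
    return reasons
```

Returning every breached rule, rather than stopping at the first, gives auditors a complete record of why an order was blocked.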

Customer trust

Trust is essential to the relationship between customers and financial institutions, and a lack of transparency or perceived unfairness in AI applications can erode it. Customers expect clear and fair decision-making; any opacity can breed skepticism. To maintain trust, institutions should keep a human-centric approach, with human oversight of AI systems that balances technology with a personal touch and accountability. Keeping a responsible human in the loop, even in automated processes, reassures customers that their interests are protected and that decisions can be reviewed and explained personally.

Strategies to mitigate:

- Develop clearer communication strategies around AI applications, explaining how these systems work, the data they use, and how decisions are made.

- Establish strong human oversight mechanisms within AI systems, so that decisions can be reviewed, interpreted, and, if necessary, overridden by a human.
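A human-in-the-loop policy can be expressed as a simple routing rule. The sketch below assumes a hypothetical `Decision` record carrying the model's outcome and confidence; it sends every decline and every low-confidence decision to a human reviewer, and lets the reviewer's outcome override the model's. The 0.9 confidence threshold is an arbitrary placeholder, not a recommended value.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Decision:
    application_id: str
    outcome: str       # "approve" or "decline"
    confidence: float  # model's confidence in [0, 1]


def route(decision: Decision, min_confidence: float = 0.9) -> str:
    """Send adverse or low-confidence AI decisions to a human reviewer."""
    if decision.outcome == "decline":
        return "human_review"  # adverse outcomes always get a human look
    if decision.confidence < min_confidence:
        return "human_review"
    return "auto"


def final_outcome(decision: Decision, reviewer_outcome: Optional[str] = None) -> str:
    """A human reviewer's outcome, when present, overrides the model's."""
    return reviewer_outcome if reviewer_outcome is not None else decision.outcome
```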

Workforce competency

As financial institutions increasingly use AI for purposes such as risk management and customer service, a workforce skilled in managing, understanding, and ethically using these technologies becomes critical. A lack of the necessary skills can lead to mismanagement, oversight failures, and missed opportunities, reducing operational efficiency and raising ethical and compliance risks. The fast pace of AI development requires continuous workforce education to keep a gap from opening between technological advancements and human expertise. Such a gap risks over-reliance on AI, potentially leading to biases, errors, or ethical issues in financial decision-making.

Strategies to mitigate:

- Foster partnerships with educational institutions to develop specialized AI curricula for finance professionals.

- Launch intra-departmental training programs to help staff more confidently embrace AI.

- Work with an outsourced vendor to acquire technical AI talent quickly.

AI risk management frameworks

There has been an accelerated uptake of AI by organizations throughout the financial services industry. In line with this trend, we're seeing an intensifying focus on fairness, ethics, accountability and transparency (FEAT) in AI- and data analytics-driven decision-making.

Industry leaders and standards bodies worldwide are creating standardized, consistent principles to govern the use of AI and its outcomes:

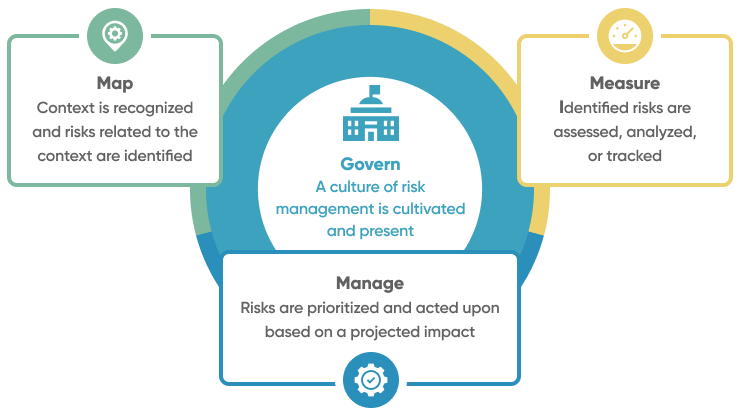

NIST AI Risk Management Framework

The NIST AI Risk Management Framework (AI RMF), released by the National Institute of Standards and Technology (NIST) in January 2023, is a comprehensive set of industry-agnostic guidelines. Its primary purpose is to assist organizations in assessing and managing the risks associated with the implementation and use of artificial intelligence (AI) systems.

The framework was developed through a consensus-driven, open, and transparent process that drew on input from various stakeholders, workshops, and public comments. This collaboration was intended to ensure a holistic approach to risk management.

Key Objectives

- Trustworthiness integration: The AI RMF aims to enhance the trustworthiness of AI products, services, and systems by helping organizations build AI solutions that are reliable, secure, and ethical.

- Voluntary adoption: The framework is designed for voluntary use, allowing organizations to adopt it based on their specific needs and risk profiles.

- Lifecycle approach: The AI RMF emphasizes risk management at each stage of the AI lifecycle, ensuring ongoing monitoring and improvement.

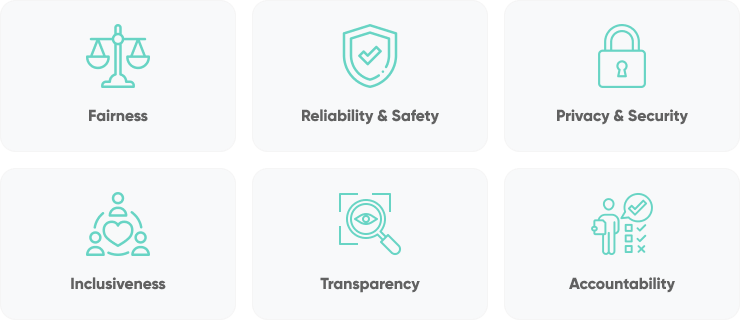

Microsoft Responsible AI Standard

The Microsoft Responsible AI Standard establishes comprehensive guidelines for ethical AI development and deployment within Microsoft, aiming to embed responsibility throughout the lifecycle of AI systems. It emphasizes developing AI in alignment with Microsoft's six core principles: fairness, reliability and safety, privacy and security, inclusiveness, transparency, and accountability. The initiative seeks to ensure that AI technologies benefit society while addressing potential risks and ethical concerns.

Key Objectives

- Internal Microsoft guidance: It was initially created to give Microsoft's own AI teams and developers concrete, actionable guidance.

- Industry support: The framework intends to share what Microsoft has learned, invite feedback from others, and contribute to the discussion about building better norms and practices around AI.

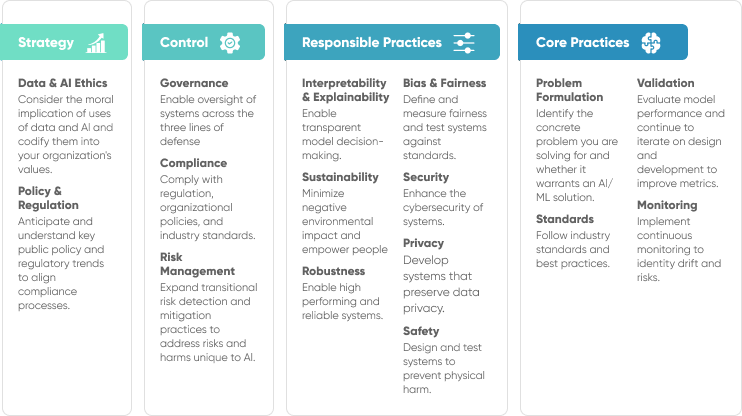

PwC Responsible AI framework

The PwC Responsible AI framework offers a customizable toolkit that helps organizations harness AI ethically and responsibly. It covers various dimensions, including governance, compliance, risk management, bias, fairness, interpretability, privacy, security, robustness, safety, data ethics, and policy and regulation.

Key Objectives

- Trustworthy AI: Foster trust by integrating ethical considerations into AI practices.

- Risk management: Identify, assess, and mitigate AI-related risks.

- Fairness and transparency: Address bias, fairness, and interpretability.

- Compliance and safety: Adhere to regulations and prioritize safety.

KPMG Trusted AI Framework

The KPMG Trusted AI Framework offers guidance for ethical and responsible AI deployment, emphasizing core principles such as fairness, transparency, explainability, and accountability. It stresses the importance of operational integrity by ensuring data integrity, reliability, security, safety, privacy, and sustainability. The framework advocates for stakeholder collaboration across organizations to align AI deployment with ethical standards and legal requirements.

Key Objectives

- Provide a “north star”: The goal of the KPMG framework is to provide guidance for organizations to build and expand their generative artificial intelligence initiatives through the use of safe and ethical practices.

- Bring trusted AI practices to life: KPMG aims to provide best practices for every stage of the deployment lifecycle, so they can become part of organizations’ DNA.

Conclusion

The advent of generative AI in financial services heralds a new era of complexity and opportunity, along with the myriad risks it introduces. Financial institutions must adopt comprehensive risk management frameworks, foster a culture of ethical AI use, and engage in continuous learning to address these challenges effectively. By doing so, they can harness the transformative power of AI while ensuring the safety, fairness, and integrity of their operations, ultimately contributing to a more robust and resilient financial ecosystem.