According to a recent Wakefield Research study, enterprise data engineers spend nearly 50% of their time building and maintaining data pipelines. With data volumes continuing to grow exponentially, this manual effort is not sustainable. Automating data engineering processes is now more essential than ever for enhancing efficiency, reducing resource strain, and enabling engineers to focus on more strategic tasks.

In this article, we'll tackle the basics of data engineering automation and break down the top 7 opportunities that engineering teams can leverage to automate their workflows and streamline data management.

What is data engineering automation?

Data engineering automation refers to the use of technology and software tools to streamline and automate the various processes involved in managing and manipulating data. This encompasses tasks such as data extraction, transformation, loading (ETL), data integration, and data pipeline management.

Data engineering automation leverages extensive metadata concerning the data sources, data structure, historical patterns, frequency of data changes, and data consumers' needs. This vast metadata pool is then leveraged to guide and refine the actions of the system.

Data automation vs. data orchestration

While data automation and data orchestration are often used interchangeably, they represent different aspects of the work data engineers and data scientists do to manage data workflows.

Data automation involves the use of data tools and scripts to automatically execute repetitive data-related tasks without manual intervention. For instance, data engineering teams can automate the extraction of data from various sources, transform it into a usable format, and load it into a data warehouse. This helps in reducing human error, speeding up data operations, and ensuring data consistency.

Data orchestration, on the other hand, refers to the coordination and management of automated data workflows. It involves the seamless integration of various automated tasks into a cohesive workflow that ensures data moves smoothly and efficiently from one process to another. Orchestration tools help data engineers manage dependencies, scheduling, and error handling, making sure that the entire data pipeline operates harmoniously and efficiently.

Business benefits of data engineering automation

In this article we’ll be focusing on data engineering automation. With that in mind, let’s focus on some of the key benefits companies can achieve by automating data processes:

- Increased efficiency and productivity: Automating data engineering tasks significantly reduces the time and effort required to manage the data flow.. This leads to increased efficiency and productivity as data teams can focus more on data automation strategy rather than repetitive, time-consuming tasks.

- Improved data quality and consistency: Automation ensures that data processing tasks are performed consistently and accurately, reducing the likelihood of human errors.

- Scalability: Automated data engineering solutions can easily scale to handle growing volumes of data. As businesses expand, their data requirements also grow, and automation helps manage this increase without a proportional rise in manual effort.

- Faster time to insights: Automation accelerates the data processing cycle, enabling data engineering teams to access insights more quickly. This rapid access to data-driven insights allows companies to respond faster to market changes and make timely data driven decisions.

7 top opportunities for data engineering automation

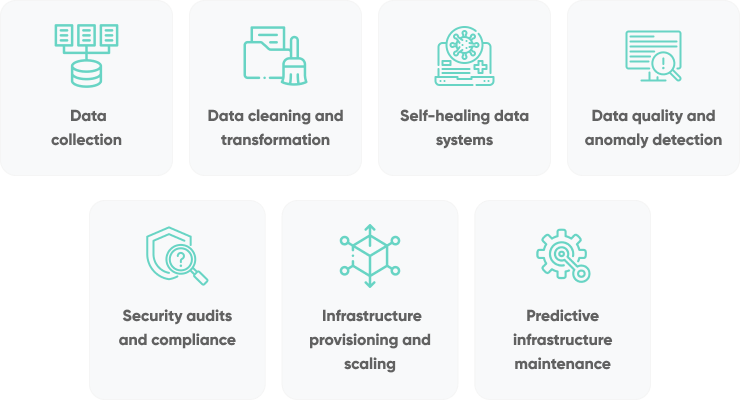

Data automation offers businesses a range of compelling benefits, but where is the best place to start building an automation strategy? In this section we’ll look at seven top opportunities for data engineering automation that can serve as a strong starting point for data engineering teams in 2024:

Data collection

The more data sources, the more complicated (and time-consuming) the data collection process becomes. From document data extraction to marketing and sales data, managing multiple data streams manually is prone to errors and inefficiencies. However, by automating these processes, companies can ensure that they are consistently collecting up-to-date and accurate information without the need for manual intervention.

To automate this kind of data collection, companies can leverage a data pipeline that integrates with various APIs and data streams. The process typically involves using tools like Apache NiFi or AWS Glue to extract data from different sources. This data is then transformed and cleaned to ensure it meets the required quality standards (we’ll look at this in more detail in the next section!).

Finally, the data is loaded into a data lake, such as Amazon S3 or Azure Data Lake Storage, where it can be stored in a raw format for further processing and analysis.

Automating these steps reduces the risk of human error, ensures timely data collection, and allows for scalable data storage and retrieval.

PRO TIP: AI-powered anomaly detection tools like Microsoft’s AI Anomaly Detector can help identify and flag unusual patterns or discrepancies in data streams, ensuring higher data quality and reliability. By integrating AI into your automated data pipeline, you can significantly boost the efficiency and intelligence of your data engineering processes.

Read More: 8 Intelligent Document Processing Tools With The Best Accuracy

Data transformation and cleaning

Automated data transformation and cleaning are critical components of the data engineering process, ensuring that raw data is converted into a usable format while maintaining high quality. By leveraging advanced tools and algorithms, a data engineering team can streamline these processes, significantly reducing manual effort and minimizing the risk of human error. Automated data cleaning solutions use rule-based algorithms and machine learning to identify and rectify issues in the data system such as incomplete, incorrect, inaccurate, or irrelevant data.

Key types of data cleaning tasks include:

- Removing duplicates and irrelevant data: Eliminating duplicate records and observations that do not fit the scope of analysis helps to focus the dataset, making it more manageable and relevant.

- Fixing structural errors: Correcting formatting errors in data types (e.g., text instead of date), values, codes, IDs, etc., to maintain data integrity. This can be done using functions in spreadsheets or specialized tools like OpenRefine.

- Detecting and removing outliers: Identifying data points that are abnormally high or low compared to the overall distribution. Statistical methods help assess if they are true outliers that need to be filtered out.

- Handling missing data: Determining and replacing empty cells through data imputation or removing rows with many blank fields. Estimates like averages or other statistical methods can be used for imputation.

- Validating and checking data quality: After cleaning, validating that changes did not introduce new errors is essential. Quality checks with profiling tools help visualize patterns that may require further fixing.

PRO TIP: To implement automated data transformation and cleaning, consider using a tool such as:

- Akkio is a comprehensive machine learning platform that automates data preparation, transformation, analytics, and forecasting with advanced AI-driven data cleaning.

- WinPure specializes in data cleansing and management, focusing on identifying duplicates, standardizing formats, filling missing values, and validating against business rules.

- Integrate.io offers over 300 pre-built connectors and provides robust data cleaning capabilities like data validation, standardization, and duplicate matching for large datasets.

Creating self-healing data systems

In 2024, the development of self-healing data systems represents a significant opportunity for businesses looking to enhance their data infrastructure's reliability and resilience. Self-healing systems are designed to automatically detect, diagnose, and rectify data issues without human intervention. This proactive approach ensures that data pipelines remain robust and operational, even when there are unexpected disruptions or errors.

Implementing self-healing data systems involves integrating AI and machine learning technologies that continuously learn from past incidents and improve their diagnostic and corrective capabilities over time. As these systems evolve, they become increasingly adept at handling a wide range of data issues autonomously.

For businesses of all sizes, but especially for those with complex data environments, investing in self-healing data systems is a strategic move, helping to ensure your data infrastructure remains resilient and capable of supporting critical operations, even as data volumes and complexities continue to grow.

PRO TIP: Consider data pipeline tools with self-healing capabilities built in such as:

- Datadog is a continuous end-to-end testing platform that automatically tracks UI changes in an application and adjusts to changes with no user intervention.

- Apache Airflow is an open-source workflow management tool that allows for automated monitoring and corrective actions within data pipelines through customizable scripts.

- StreamSets is an enterprise data integration and management platform with support for “smart” data pipelines with self healing capabilities.

Data quality and anomaly detection

Maintaining high data quality and effectively detecting anomalies are crucial for any data-driven organization. Automated tools for data quality and anomaly detection can help streamline these processes, ensuring that data remains accurate and reliable. These tools use advanced algorithms and machine learning to identify inconsistencies, errors, and unusual patterns in data, allowing for timely corrections and better decision-making.

Key components of data quality and anomaly detection:

- Real-time monitoring: Continuously monitor data for anomalies, such as sudden changes or deviations from expected patterns.

- Error identification: Automatically detect and flag incomplete, incorrect, or inconsistent data entries.

- Predictive analytics: Use historical data and machine learning models to predict and prevent potential data quality issues.

Security audits and compliance

Automating security audits and compliance processes presents a significant opportunity for businesses to enhance their data security and ensure adherence to regulatory standards. Automation tools can streamline the monitoring, detection, and reporting of security vulnerabilities, reducing the risk of data breaches and non-compliance penalties.

Implementing automated security audit tools can significantly reduce the workload on IT and compliance teams. These tools can identify and mitigate threats faster than manual processes, ensuring a more secure and compliant data environment. Key components of automated security audits and compliance include:

- Continuous monitoring: Automated systems continuously monitor for security threats and compliance issues, providing real-time alerts and reports.

- Vulnerability detection: Use advanced algorithms to identify potential security weaknesses and anomalies in the data infrastructure.

- Automated reporting: Generate detailed compliance reports automatically, ensuring timely and accurate documentation for regulatory requirements.

PRO TIP: To automate security audits and compliance processes, consider using tools such as;

- Solarwinds Papertrail is a comprehensive log manager that provides access to archives for auditing.

- LogicGate is a cloud-based IT risk assessment system that can help automate compliance auditing tasks.

Infrastructure provisioning and scaling

Infrastructure provisioning and scaling are critical for handling the ever-increasing data workloads in modern enterprises. Automating these processes offers significant benefits in terms of efficiency, reliability, and cost management.

Automated provisioning tools can rapidly and consistently set up computing environments, reducing setup time and minimizing human error. This ensures that resources are allocated and configured optimally, meeting specific requirements without manual intervention.

Scaling involves adjusting computing resources to match workload demands. Automation tools enable seamless auto-scaling, where resources are dynamically added or removed based on real-time needs. Cloud providers' auto-scaling features, along with load balancers, ensure that applications remain performant and reliable, even during peak usage. Predictive scaling, leveraging machine learning, anticipates future demands and adjusts resources proactively, enhancing responsiveness and cost efficiency.

PRO TIP: Several tools and platforms facilitate automated infrastructure provisioning and scaling, including:

- Infrastructure as code (IaC): Tools like Terraform, AWS CloudFormation, and Google Cloud Deployment Manager allow you to define your infrastructure in code, enabling version control, repeatability, and consistency in provisioning environments.

- Configuration management: Tools such as Ansible, Puppet, and Chef automate the deployment, configuration, and management of applications and infrastructure, ensuring that systems are configured to a desired state.

- Auto-scaling services: AWS Auto Scaling and Azure Autoscale automatically adjust the number of compute resources based on demand, ensuring applications maintain performance and availability during varying load conditions.

- Container orchestration: Kubernetes and Docker Swarm manage containerized applications, providing automated deployment, scaling, and operations of application containers across clusters of hosts.

Predictive data infrastructure maintenance

Predictive data infrastructure maintenance leverages advanced analytics and machine learning to anticipate and address potential failures before they occur. This proactive approach involves continuously monitoring infrastructure components, analyzing historical data, and using predictive models to forecast maintenance needs. By identifying issues early, businesses can take measures to maintain system health, reduce downtime, and optimize performance.

Predictive analytics use historical and real-time data to forecast potential failures and maintenance requirements. Continuous monitoring detects early signs of wear and anomalies, allowing for timely interventions. Automated systems generate alerts and trigger predefined actions when potential issues are detected, ensuring swift and effective responses.

PRO TIP: Consider using tools like IBM Maximo, Splunk, and Microsoft Azure Monitor. These platforms analyze performance data to predict and prevent failures, providing automated alerts and actionable insights.

Read More: Generative AI vs Predictive AI: 7 Key Distinctions

Data engineering automation with SoftKraft

At SoftKraft we offer data engineering services to help teams modernize their data strategy and start making the most of their business data. We’ll work closely with you to understand your unique automation challenges and map out a strategic solution that addresses your specific needs, so you can maximize your ROI. At the end of the day, the only metric that matters to us is project success.

Conclusion

Incorporating data engineering automation into your business strategy offers unparalleled benefits, from increased efficiency and scalability to enhanced data quality and faster insights. By leveraging advanced tools and technologies, companies can stay competitive in a data-driven world, making informed decisions swiftly and effectively.