The "should we adopt AI?" debate is over. AI in most large organisations is now a budgeted, governed workload that sits alongside cloud and observability in the enterprise stack. The harder questions are which architectures to commit to, how to ship without getting blocked by legal review, and which workloads actually justify their inference cost at scale.

This article surveys the 12 trends that are defining how enterprises build, govern, and pay for AI right now, including multi-agent orchestration, MCP adoption, governance as a procurement blocker, the build-vs-buy reassessment driven by model commoditisation, and the operational disciplines that separate production AI from pilots.

- The state of enterprise AI adoption

- Key challenges enterprises are facing with AI adoption

- 12 Enterprise AI Trends Shaping 2026

- 1. AI as a procurement line item, not an innovation budget

- 2. Multi-agent orchestration moves into production

- 3. MCP becomes the interop standard for tools and data

- 4. AI governance and compliance as a procurement blocker

- 5. Build-vs-buy reassessment driven by model commoditisation

- 6. Document extraction matures into production IDP

- 7. Retrieval moves from static RAG to agentic retrieval

- 8. Human-in-the-loop tightens around the high-risk steps

- 9. Customer service moves from chatbots to action-taking agents

- 10. Agent orchestration platforms displace early process automation

- 11. The AI hire profile narrows to "ships agents in production"

- 12. AI-native development tooling becomes a hiring filter

- Conclusion

The state of enterprise AI adoption

In 2026, the experimentation phase is firmly behind us. Enterprises that started exploring generative AI three years ago have either moved workloads into production or quietly shelved the pilots. What remains is a more pragmatic conversation: which capabilities are worth owning, which to rent, and how to govern any of it at scale.

Three shifts characterise where most enterprise AI programmes sit today:

- Procurement maturity. AI spend is now budgeted alongside cloud and observability, not pulled from discretionary innovation funds. That changes who signs off and what evidence they expect.

- Architecture decisions over capability exploration. The frontier models are good enough for most enterprise tasks. The strategic question is no longer "what can the model do?" but "where does this fit in the stack, and what do we own?"

- Governance as a gating function. Compliance, risk, and legal teams now sit upstream of procurement, not downstream. EU AI Act obligations and the NIST AI Risk Management Framework (AI RMF) have moved governance from a future concern to a present blocker.

Key challenges enterprises are facing with AI adoption

Enterprises building on LLMs and generative AI are running into the same set of operational concerns:

- Data: Retaining proprietary expertise, protecting corporate IP from training-data leakage, and controlling what flows into vendor systems.

- Customisation and flexibility: Adapting models to internal data and workflows without locking into a single vendor's fine-tuning stack.

- Governance: Controlling access to sensitive information and producing the audit trails that compliance and procurement now demand. The EU AI Act and the NIST AI RMF have made this a concrete workflow, not a vague concern.

- Cost discipline: Inference costs are no longer a footnote. At production volume, the difference between a routed model and a default frontier model is measurable on the P&L. See AI costs for the breakdown.

- Security and compliance: Vendor questionnaires, data residency, and tenancy isolation now gate purchases. Procurement won't sign without them.
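
The cost-discipline point is easiest to see as arithmetic. The sketch below compares sending every request to a frontier model against routing only a share of them there. All prices, token counts, and traffic volumes are invented for illustration and do not reflect any vendor's actual pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """USD per million tokens. Values used below are made up for illustration."""
    input_per_mtok: float
    output_per_mtok: float


def request_cost(price: ModelPrice, input_tokens: int, output_tokens: int) -> float:
    """Cost of one request at the given token counts."""
    return (input_tokens * price.input_per_mtok
            + output_tokens * price.output_per_mtok) / 1_000_000


def monthly_cost(requests: int, frontier_share: float,
                 frontier: ModelPrice, small: ModelPrice,
                 input_tokens: int, output_tokens: int) -> float:
    """Monthly bill when `frontier_share` of requests go to the frontier model."""
    frontier_requests = requests * frontier_share
    small_requests = requests - frontier_requests
    return (frontier_requests * request_cost(frontier, input_tokens, output_tokens)
            + small_requests * request_cost(small, input_tokens, output_tokens))


# Hypothetical prices and traffic, not real vendor figures.
FRONTIER = ModelPrice(input_per_mtok=10.0, output_per_mtok=30.0)
SMALL = ModelPrice(input_per_mtok=0.5, output_per_mtok=1.5)

default_bill = monthly_cost(1_000_000, 1.0, FRONTIER, SMALL, 2_000, 500)
routed_bill = monthly_cost(1_000_000, 0.2, FRONTIER, SMALL, 2_000, 500)
print(f"default: ${default_bill:,.0f}  routed: ${routed_bill:,.0f}")
```

With these invented numbers the routed bill comes to roughly a quarter of the default one. The real ratio depends entirely on actual prices and on how many requests genuinely need the frontier model.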

12 Enterprise AI Trends Shaping 2026

The list below reflects what enterprises are actually buying, building, and arguing about today, not the capabilities still being demoed.

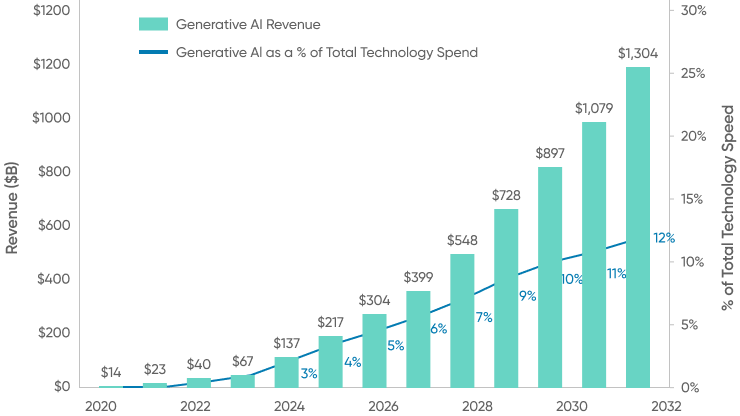

AI as a procurement line item, not an innovation budget

AI spend has graduated from "experiment" to "category." It now sits alongside cloud, observability, and identity in the enterprise stack, with its own approval workflows, vendor evaluations, and renewal cycles. The shift matters because it changes who decides: finance, legal, and security are now in the room before engineering picks a vendor.

The practical implications:

- Multi-year contracts and committed usage discounts are now the norm with the major model vendors, mirroring how enterprises have always bought cloud capacity.

- Internal chargeback for AI usage is becoming standard. Teams that were happy to burn shared credits during early pilots are now seeing inference costs allocated back to their business unit.

- Vendor consolidation is starting. The same buyers who once ran proofs of concept against five model providers now standardise on one or two, with a documented exit path.

Multi-agent orchestration moves into production

Single-prompt chatbots have given way to coordinated agents that hand off tasks. The pattern is now common in production: a planner agent decomposes a request, executor agents run the steps, and a verifier agent checks the output before returning to the user.

What's making this viable now is the reliability work that wasn't there a year ago: agent-level tracing, deterministic tool calls, retry policies, and evaluation harnesses that catch regressions before they hit users. The orchestration patterns enterprises are converging on:

- Planner / executor splits for complex workflows where one model decides the plan and cheaper models run the steps.

- Parallel agents for tasks that fan out (research across sources, batch document processing, multi-channel customer triage).

- Verifier agents that grade outputs against a rubric before they're shown to users or downstream systems.

The teams shipping multi-agent systems reliably are the ones who treat orchestration as a software engineering problem, not a prompt engineering problem.
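
As a rough illustration of the planner / executor / verifier pattern, here is a minimal sketch in which each agent is a plain Python callable. In a real system each callable would wrap a model call. The stub agents, `RunResult`, and the retry limit are assumptions made for this example, not a reference design.

```python
from dataclasses import dataclass
from typing import Callable

# Each "agent" is a plain callable here; real agents would wrap model calls.
Planner = Callable[[str], list[str]]
Executor = Callable[[str], str]
Verifier = Callable[[str, list[str]], bool]


@dataclass
class RunResult:
    request: str
    steps: list[str]
    outputs: list[str]
    verified: bool
    attempts: int


def run_pipeline(request: str, planner: Planner, executor: Executor,
                 verifier: Verifier, max_attempts: int = 2) -> RunResult:
    """Plan once, then execute and verify, retrying execution up to max_attempts."""
    steps = planner(request)
    outputs: list[str] = []
    for attempt in range(1, max_attempts + 1):
        outputs = [executor(step) for step in steps]
        if verifier(request, outputs):
            return RunResult(request, steps, outputs, True, attempt)
    # Verification never passed: surface the failure instead of hiding it.
    return RunResult(request, steps, outputs, False, max_attempts)


# Hypothetical stub agents standing in for model-backed ones.
def stub_planner(request: str) -> list[str]:
    return [f"look up: {request}", f"summarise: {request}"]


def stub_executor(step: str) -> str:
    return f"done({step})"


def stub_verifier(request: str, outputs: list[str]) -> bool:
    return bool(outputs) and all(request in output for output in outputs)


result = run_pipeline("order 42 status", stub_planner, stub_executor, stub_verifier)
print(result.verified, result.attempts)
```

Keeping the control flow in ordinary code makes the retry policy, the trace of steps, and the failure path testable without calling a model.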

MCP becomes the interop standard for tools and data

Anthropic's Model Context Protocol (MCP) has become the de facto way enterprises connect AI agents to internal tools, databases, and SaaS systems. MCP servers replace the bespoke integration glue that defined 2024-era AI projects, and they're displacing the GPT Store / plugin marketplace pattern that never quite landed in the enterprise.

Why MCP took hold where plugin marketplaces didn't:

- It's a protocol, not a marketplace. Enterprises can run MCP servers on their own infrastructure, behind their own auth, with their own audit logs. No vendor gatekeeping.

- Tool definitions are portable. An MCP server written for one model client works with others. That breaks the lock-in pattern that made buyers wary of plugin ecosystems.

- It maps cleanly to existing security reviews. An MCP server is a service; security teams already know how to evaluate services.

The pattern that has emerged: enterprises are building internal MCP servers for their core systems (CRM, ticketing, data warehouse) and exposing them to multiple AI clients rather than committing to one vendor's tool ecosystem.
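
To make "an MCP server is a service" concrete, the sketch below handles two JSON-RPC messages shaped like MCP's `tools/list` and `tools/call` requests, using only the standard library. It is a deliberately simplified illustration: it omits the initialisation handshake, transport, and auth, and a production server would normally be built on an official MCP SDK. The in-memory CRM data and the `lookup_account` tool are hypothetical.

```python
import json
from typing import Any

# Hypothetical in-memory "CRM" standing in for a real system of record.
_ACCOUNTS = {"acme": {"owner": "j.doe", "tier": "enterprise"}}


def lookup_account(arguments: dict[str, Any]) -> str:
    account = _ACCOUNTS.get(arguments.get("account_id", ""))
    return json.dumps(account) if account else "account not found"


# Tool registry: metadata exposed to clients plus the local handler.
TOOLS: dict[str, dict[str, Any]] = {
    "lookup_account": {
        "description": "Return owner and tier for a CRM account.",
        "inputSchema": {
            "type": "object",
            "properties": {"account_id": {"type": "string"}},
            "required": ["account_id"],
        },
        "handler": lookup_account,
    }
}


def _error(message_id: Any, code: int, text: str) -> dict:
    return {"jsonrpc": "2.0", "id": message_id,
            "error": {"code": code, "message": text}}


def handle(message: dict) -> dict:
    """Answer one JSON-RPC request (simplified; no handshake or auth)."""
    message_id = message.get("id")
    method = message.get("method")
    if method == "tools/list":
        tools = [{"name": name, "description": tool["description"],
                  "inputSchema": tool["inputSchema"]}
                 for name, tool in TOOLS.items()]
        result: dict[str, Any] = {"tools": tools}
    elif method == "tools/call":
        params = message.get("params", {})
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            return _error(message_id, -32602, "unknown tool")
        text = tool["handler"](params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}]}
    else:
        return _error(message_id, -32601, "method not found")
    return {"jsonrpc": "2.0", "id": message_id, "result": result}


print(handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"}))
```

Because the tool description and input schema travel with the server rather than with any one client, the same definition can be offered to several AI clients, which is the portability argument made above.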

AI governance and compliance as a procurement blocker

Legal, security, and risk now sit upstream of AI vendor selection, not downstream. The EU AI Act, NIST AI RMF, and an expanding catalogue of vendor questionnaires have moved governance from "we'll figure it out post-purchase" to a hard gate.

What this looks like in practice:

- Pre-purchase risk classification. Buyers categorise the intended use under EU AI Act risk tiers before issuing an RFP. High-risk use cases trigger documentation requirements that some vendors can't meet.

- Model and data lineage requirements. Procurement asks where training data came from, what the retention policy is, and what audit logs the vendor will produce. "Trust us" is no longer an answer.

- Internal AI committees that review use cases before any spend is approved. These committees typically include legal, security, data governance, and a business sponsor; their default answer is "not yet."

The practical effect: buying cycles are longer, but the projects that survive them are more likely to make it to production.

Build-vs-buy reassessment driven by model commoditisation

When frontier-class capability is available cheaply from any of three vendors, the strategic question shifts. It is no longer "which model platform do we standardise on?" It's "which parts of this stack should we own, and which should we rent?"

The factors enterprises are weighing:

- Reasoning models change the math. Capabilities that required custom fine-tuning two years ago now work zero-shot with a strong reasoning model. That shrinks the case for owning a model.

- Switching costs are lower than they look. With MCP and standardised inference APIs, the lock-in lives in prompts, evals, and orchestration code, not in the model itself. Many enterprises are designing for portability from day one.

- Fine-tuning costs have dropped, but so has the case for it. Strong base models with good retrieval often beat a fine-tuned smaller model on cost-adjusted performance.

The pattern: enterprises rent frontier capability, own the orchestration layer, and own anything that touches proprietary data.
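
One way to design for portability is to have application code depend on a narrow interface rather than on a vendor SDK. The sketch below assumes a single `complete` method. `ChatModel`, `VendorAClient`, and `VendorBClient` are hypothetical names, and the clients return canned strings instead of calling any real API.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The only surface the application is allowed to depend on."""

    def complete(self, prompt: str) -> str: ...


class VendorAClient:
    """Hypothetical adapter; a real one would call vendor A's SDK here."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-a] {prompt[:40]}"


class VendorBClient:
    """Hypothetical adapter for a second vendor."""

    def complete(self, prompt: str) -> str:
        return f"[vendor-b] {prompt[:40]}"


def summarise_ticket(model: ChatModel, ticket_text: str) -> str:
    # The prompt and orchestration logic are the owned assets; the model is swappable.
    prompt = f"Summarise this support ticket in one sentence:\n{ticket_text}"
    return model.complete(prompt)


for client in (VendorAClient(), VendorBClient()):
    print(summarise_ticket(client, "Customer cannot reset their password."))
```

Swapping vendors then means writing one new adapter and re-running the eval suite, rather than touching every call site.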

Document extraction matures into production IDP

Document processing was an obvious early use case for LLMs, and it has matured. Multimodal models now read structured PDFs, scanned forms, and mixed-content documents reliably enough that intelligent document processing is a production workload, not a pilot. The competitive landscape has moved from "can we extract this field?" to "can we run extraction at enterprise volume with the audit trail compliance requires?"

What's changed since 2024:

- Multimodal models are the default extraction engine. Specialised document models still have a role, but most enterprise IDP pipelines now route to a general multimodal model with task-specific prompting and validation.

- Validation and human-in-the-loop steps are part of the pipeline. Production IDP isn't model-only; it's model plus structured validation plus selective human review on low-confidence outputs.

- The integration question dominates. The model is rarely the bottleneck; getting the extracted data into the systems of record (ERP, claims, case management) is.

See intelligent document processing solutions for the production patterns that have settled out.
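
The "model plus structured validation plus selective human review" pipeline can be sketched as a triage step over extracted fields. The field names, the regular-expression validators, and the 0.9 confidence threshold are assumptions for illustration. The sketch also assumes the extraction model reports a per-field confidence.

```python
import re
from dataclasses import dataclass


@dataclass
class ExtractedField:
    name: str
    value: str
    confidence: float  # assumed to be reported by the extraction model, in [0, 1]


# Hypothetical format rules; real pipelines would also cross-check systems of record.
VALIDATORS = {
    "invoice_number": lambda v: re.fullmatch(r"INV-\d{6}", v) is not None,
    "total": lambda v: re.fullmatch(r"\d+\.\d{2}", v) is not None,
}


def triage(fields: list[ExtractedField], min_confidence: float = 0.9):
    """Split fields into auto-accepted and needs-human-review."""
    accepted, review = [], []
    for item in fields:
        validator = VALIDATORS.get(item.name, lambda v: True)
        if validator(item.value) and item.confidence >= min_confidence:
            accepted.append(item)
        else:
            review.append(item)
    return accepted, review


fields = [
    ExtractedField("invoice_number", "INV-004217", 0.98),
    ExtractedField("total", "1O4.50", 0.97),   # letter O instead of zero
    ExtractedField("vendor", "Acme Ltd", 0.62),
]
accepted, review = triage(fields)
print([f.name for f in accepted], [f.name for f in review])
```

Note that the mistyped total is sent to review despite its high confidence score, which is why structural validation sits alongside confidence thresholds rather than being replaced by them.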

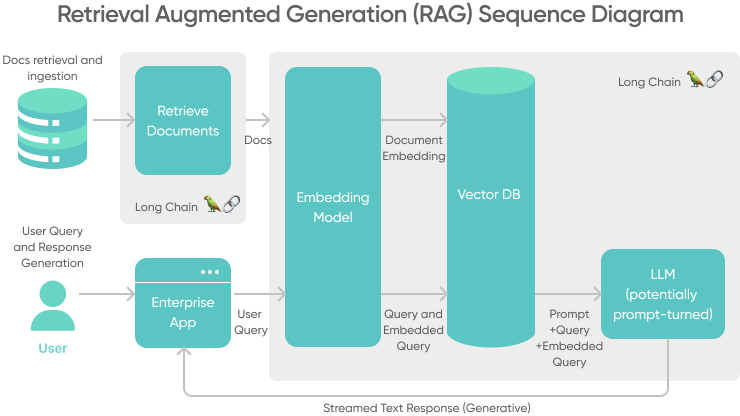

Retrieval moves from static RAG to agentic retrieval

RAG is now standard practice; the trend is what comes after it. Static vector-database RAG, where every query embeds and searches the same index, is giving way to agentic retrieval: agents that decide which tools to query, iterate on their search strategy, and combine results from structured systems, unstructured stores, and live APIs.

The shift in production retrieval:

- Tool-augmented retrieval. Agents call SQL, search APIs, vector stores, and MCP-exposed services in the same workflow, choosing based on the question.

- Iterative refinement. Instead of a single retrieval pass, agents reformulate queries, expand or narrow scope, and verify results before generating an answer.

- Source attribution as a first-class output. Production systems cite sources by default; this is now a procurement requirement, not a nice-to-have.
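
The three behaviours above (choosing a tool, refining the query, and citing sources) can be sketched in a few lines. Everything here is a stand-in: the two in-memory "tools", the rule-based `choose_tool` (a model would make this decision in practice), and the drop-the-last-word `reformulate` strategy are assumptions for illustration.

```python
import re
from dataclasses import dataclass


@dataclass
class Hit:
    source: str
    text: str


def search_orders(query: str) -> list[Hit]:
    orders = {"1001": "Order 1001 shipped on 2026-01-12."}  # hypothetical data
    return [Hit(f"orders_db:{oid}", text)
            for oid, text in orders.items() if oid in query]


def search_docs(query: str) -> list[Hit]:
    docs = {"returns-policy.md": "Returns are accepted within 30 days."}
    words = query.lower().split()
    # Strict match: every query word must appear in the document.
    return [Hit(name, text) for name, text in docs.items()
            if words and all(w in text.lower() for w in words)]


TOOLS = {"orders": search_orders, "docs": search_docs}


def choose_tool(query: str) -> str:
    """Stand-in for a model decision: order-like IDs go to the orders tool."""
    return "orders" if re.search(r"\b\d{4}\b", query) else "docs"


def reformulate(query: str) -> str:
    """Naive broadening: drop the last word."""
    return " ".join(query.split()[:-1])


def retrieve(query: str, max_rounds: int = 3) -> list[Hit]:
    current = query
    for _ in range(max_rounds):
        if not current:
            break
        hits = TOOLS[choose_tool(current)](current)
        if hits:
            return hits
        current = reformulate(current)
    return []


def answer_with_sources(query: str) -> dict:
    hits = retrieve(query)
    if not hits:
        return {"answer": "No supporting source found.", "sources": []}
    return {"answer": " ".join(h.text for h in hits),
            "sources": [h.source for h in hits]}


print(answer_with_sources("returns accepted laptops"))
```

The answer is only ever assembled from retrieved hits, so every response carries its sources, and a query with no supporting material returns an explicit "not found" rather than an unsupported answer.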

Human-in-the-loop tightens around the high-risk steps

Human-in-the-loop is no longer a blanket policy ("a human checks every output"); it's a targeted intervention at the points where the cost of being wrong is highest. The teams running HITL well today instrument their pipelines, identify the low-confidence or high-impact decisions, and route only those to humans.

Two patterns have stabilised:

- Tuning and evaluation. Humans label, grade, and curate the eval set. The eval set, not the model, is the durable asset.

- In-production review on confidence triggers. The system itself decides when to escalate, based on calibrated confidence scores or business rules. Humans handle the long tail; the model handles the head.
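
A confidence-triggered review step might look like the sketch below, which combines a confidence threshold with one business rule and reports the resulting escalation rate. The thresholds, the `Decision` fields, and the assumption that confidence scores are calibrated are all illustrative.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    amount: float
    confidence: float  # assumed to be a calibrated score in [0, 1]


def route(decision: Decision, min_confidence: float = 0.85,
          max_auto_amount: float = 500.0) -> tuple[str, str]:
    """Return (destination, reason) for a proposed decision."""
    if decision.confidence < min_confidence:
        return "human", "low confidence"
    if decision.amount > max_auto_amount:
        return "human", "amount above auto-approval limit"
    return "auto", "within thresholds"


def escalation_rate(decisions: list[Decision]) -> float:
    """Share of decisions sent to humans; a metric worth tracking over time."""
    if not decisions:
        return 0.0
    escalated = sum(1 for d in decisions if route(d)[0] == "human")
    return escalated / len(decisions)


batch = [
    Decision("approve_claim", 120.0, 0.97),
    Decision("approve_claim", 2_400.0, 0.99),  # high impact, so escalated
    Decision("approve_claim", 80.0, 0.55),     # low confidence, so escalated
    Decision("approve_claim", 60.0, 0.91),
]
for d in batch:
    print(d.amount, route(d))
print("escalation rate:", escalation_rate(batch))
```

Returning a reason alongside the destination gives reviewers context and makes it possible to see which rule is driving the human workload.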

Customer service moves from chatbots to action-taking agents

Customer service is the use case where "agent" stopped being a buzzword. The successful deployments aren't bots that answer questions; they're agents that take action (issue refunds, update orders, escalate cases) with full audit trails and reversibility.

What this requires that 2024-era chatbots didn't:

- Real tool access through MCP or equivalent, with scoped permissions per agent.

- Reversibility and audit logs. Every action the agent takes is logged with the reasoning that led to it, so support leads can review and intervene.

- Confidence-based handoff. The agent handles tier-1 autonomously, escalates tier-2 with full context, and never pretends to be human.

The economic case is increasingly well understood: a well-built agent handles volume that previously required a tier-1 team, and quality becomes easier to measure and improve because every action is logged and auditable.
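
The requirements listed above (scoped permissions, audit logs with reasoning, and reversibility) can be sketched as a single gateway that every agent action passes through. `ActionGateway`, the agent names, and the in-memory refund ledger are hypothetical; a real deployment would sit in front of the order system and a durable audit store.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class AuditEntry:
    timestamp: str
    agent: str
    action: str
    order_id: str
    amount: float
    reasoning: str
    reverted: bool = False


class ActionGateway:
    """Single choke point through which agents act on orders."""

    def __init__(self, permissions: dict[str, set[str]]) -> None:
        self.permissions = permissions          # agent name -> allowed actions
        self.refunded: dict[str, float] = {}    # order id -> total refunded
        self.log: list[AuditEntry] = []

    def refund(self, agent: str, order_id: str, amount: float, reasoning: str) -> int:
        """Issue a refund if the agent is permitted; return the audit entry id."""
        if "refund" not in self.permissions.get(agent, set()):
            raise PermissionError(f"{agent} is not allowed to issue refunds")
        self.refunded[order_id] = self.refunded.get(order_id, 0.0) + amount
        self.log.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            agent=agent, action="refund", order_id=order_id,
            amount=amount, reasoning=reasoning))
        return len(self.log) - 1

    def revert(self, entry_id: int) -> None:
        """Undo a logged refund once; the entry stays in the log, marked reverted."""
        entry = self.log[entry_id]
        if entry.reverted:
            return
        self.refunded[entry.order_id] -= entry.amount
        entry.reverted = True


gateway = ActionGateway({"tier1-agent": {"refund"}, "faq-agent": set()})
entry_id = gateway.refund("tier1-agent", "A1", 20.0, "duplicate charge confirmed")
gateway.revert(entry_id)
print(gateway.log[entry_id])
```

Reverting marks the entry rather than deleting it, so the log keeps a record of both the action and the intervention.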

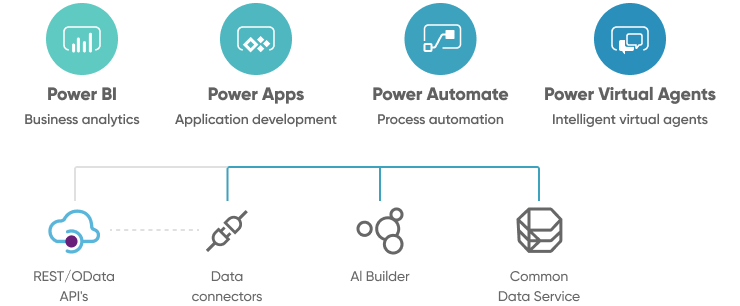

Agent orchestration platforms displace early process automation

Process automation was supposed to be the killer enterprise AI app. The "platform vs. islands of automation" problem from the early wave didn't go away, but the platforms changed. Microsoft Power Platform and the established RPA vendors are still relevant, but the new layer is agent orchestration: platforms designed for coordinating LLM-driven agents across systems, not for scripting deterministic workflows.

What enterprises are choosing between:

- Established automation platforms with bolted-on AI features. These are safe, well-governed, and limited to workflows the underlying engine can express.

- Agent orchestration platforms designed AI-first, with built-in tracing, evaluation, and tool registries. These are more flexible but newer, with less mature governance tooling.

- Custom orchestration on a model SDK. Most enterprises end up here for their high-value workflows, because the off-the-shelf platforms can't yet match what a small team can build with direct SDK access.

The AI hire profile narrows to "ships agents in production"

Competition for AI talent hasn't slowed; it has refocused. The generalist ML engineer is no longer the rare hire. The rare hire now is someone who has shipped a production agent, can write evaluation harnesses, and understands the operational disciplines (tracing, cost monitoring, prompt versioning) that make AI systems reliable.

How enterprises are responding:

- Upskilling existing senior engineers rather than hiring net-new ML specialists. A strong backend engineer who learns the agent stack is often more useful than a research-track ML hire.

- Project-based engagement with specialist firms for the architecture-defining work, with internal teams owning steady-state operations.

- Tightening the JD. "Worked with LLMs" no longer differentiates a candidate; "shipped an agent system to production with evals and observability" does.

AI-native development tooling becomes a hiring filter

Workforce training has shifted from "everyone needs an AI awareness course" to a more pointed question: can your engineers ship using AI-native tooling? Claude Code, Cursor, and Copilot are no longer optional; they're a productivity multiplier, and proficiency with them is showing up in interview loops and performance reviews.

The implications:

- Hiring signals have changed. Engineers who use AI tooling fluently tend to ship more in less time, and that gap now shows up in technical interviews.

- Internal tooling investment. Enterprises are standardising on a small set of AI-native dev tools, providing seats, and building internal patterns around them rather than letting each team pick.

- The training question is narrower. Teams don't need a generic "intro to AI" course; they need hands-on practice shipping with the tools the company has chosen.

Conclusion

The throughline of these 12 trends: AI in the enterprise has moved from capability exploration to operational discipline. Multi-agent orchestration, MCP, governance gates, model commoditisation, and AI-native engineering tooling are the structural changes that matter now. The trends that defined 2024 are now table stakes: AI embedded in enterprise software, multimodal as a baseline, generative AI as a default option.

If you're building or rearchitecting AI systems for production, our generative AI development company partners with enterprises on the architecture, governance, and orchestration decisions that determine whether AI workloads scale or stall. Book a consultation to walk through model selection, agent design, MCP integration, and the evaluation harness that lets you ship without surprises.
